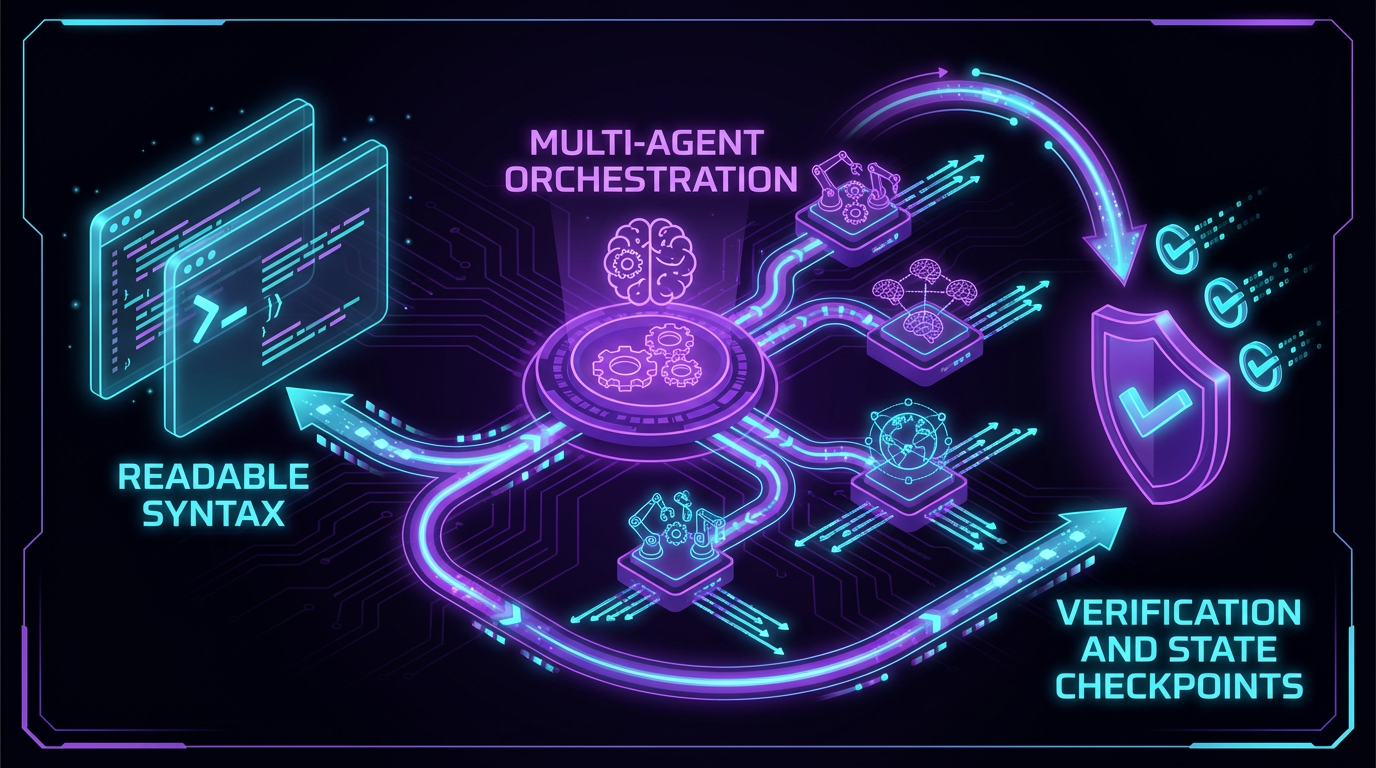

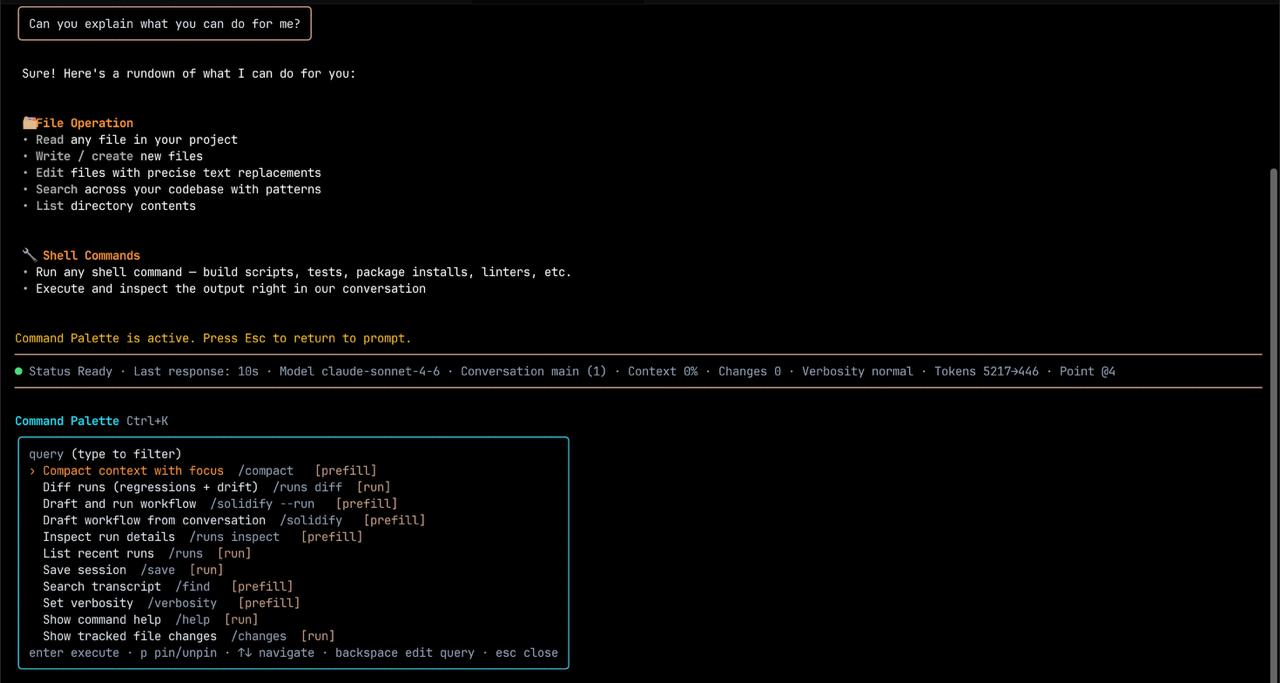

Launch from terminal-first workflows

Run orchestrated jobs locally, checkpoint progress, and resume from verified states without replacing your current tooling.

Cognitive Language Runtime

Coglan is a cognitive programming language and coding-agent environment.

The .cog format gives teams structured, resumable, and verifiable workflows that run across CLI, TUI, VS Code, CI/CD, MCP, or any tool wired to the runtime.

Start Anywhere

Teams can keep their existing environment and share one .cog contract everywhere.

Runtime behavior, checkpoints, and gate semantics stay consistent across each execution surface.

Follow agent execution, render technical markdown, and review evidence from a live terminal dashboard.

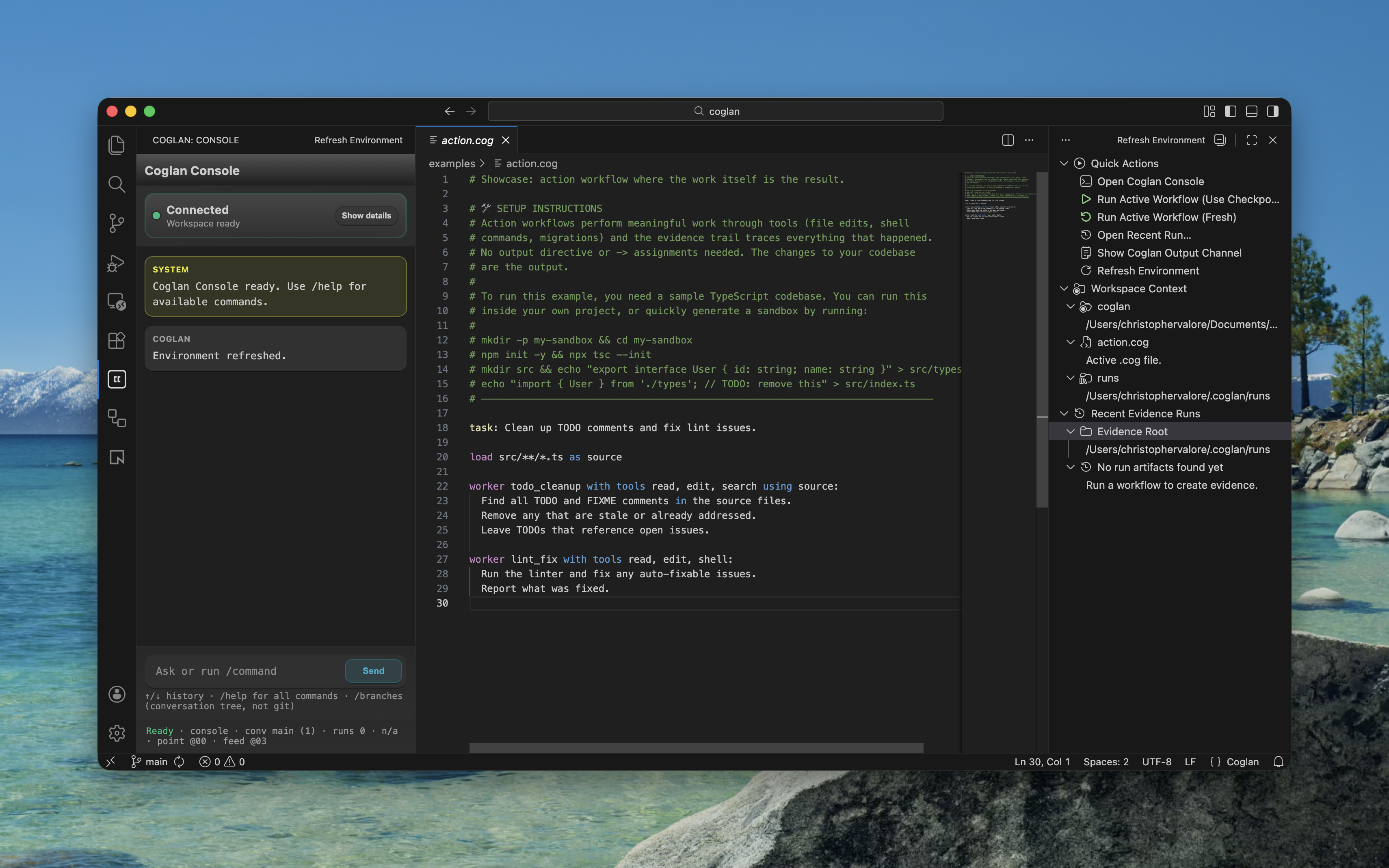

Run workflows from the editor sidebar with hover docs and artifact visibility where your team already ships software.

Core Syntax

Coglan keeps orchestration, validation, and output shaping in a single workflow definition. Every stage is auditable, and dependencies can be resumed with predictable behavior.

```
# 1. Load project metadata into context
load package.json as pkg

# 2. Autonomous worker investigates the project
worker health_check with tools read, shell using pkg:
  Assess this project's health. Check:
  - Git state (current branch, clean or dirty working tree)
  - Dependency health (outdated or missing packages)
  Return JSON with health (ready/caution/blocked),
  findings as an array, and recommendations.
  -> report

# 3. Gate enforces output structure
check report:
  report has health, findings, recommendations
  every finding has area, status, detail

# 4. Write verified results to a file
output report as json to reports/health-check.json
```

load package.json as pkg

package.json (from project root) → pkg (project metadata). Injects project metadata so the worker has real context to investigate against.

worker health_check with tools read, shell using pkg -> report

pkg metadata + read, shell tools → report (structured JSON assessment). The worker runs shell commands and reads files autonomously to assess project health.

report has health, findings, recommendations; every finding has area, status, detail

report (from worker): health, findings, recommendations; each finding needs area, status, detail. Retries automatically with specific feedback if the worker output is missing required fields.

output report as json to reports/health-check.json

reports/health-check.json and ~/.coglan/runs/. The verified report lands in a named file alongside a complete evidence trail.
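To make the gate in step 3 concrete, here is a rough Python sketch of the structural check it describes. This is not Coglan's implementation; `check_report` and the two constants are hypothetical names, and the sketch only assumes the report arrives as a parsed JSON object.

```python
# Hypothetical illustration of the step 3 gate; not part of the Coglan runtime.
REQUIRED_REPORT_KEYS = ("health", "findings", "recommendations")
REQUIRED_FINDING_KEYS = ("area", "status", "detail")


def check_report(report: dict) -> list[str]:
    """Return human-readable problems; an empty list means the gate passes."""
    # "report has health, findings, recommendations"
    problems = [
        f"report is missing '{key}'"
        for key in REQUIRED_REPORT_KEYS
        if key not in report
    ]
    findings = report.get("findings", [])
    if not isinstance(findings, list):
        return problems + ["findings must be an array"]
    # "every finding has area, status, detail"
    for index, finding in enumerate(findings):
        if not isinstance(finding, dict):
            problems.append(f"finding {index} must be an object")
            continue
        for key in REQUIRED_FINDING_KEYS:
            if key not in finding:
                problems.append(f"finding {index} is missing '{key}'")
    return problems
```

Returning a list of named problems, rather than a bare pass/fail, is what would let a runtime hand specific feedback back to the worker on retry.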

Advanced Routing

In Coglan, the language is the router. Use Gemini 3.1 Pro for massive-context log ingestion, Opus 4.6 for deep architectural reasoning, and Sonnet 4.6 for fast, highly steerable synthesis, while maintaining one transparent verification chain.

```
# 1. Import raw logs from your runtime source
call shell:cat ./logs/app.log -> raw_logs

# 2. Convert raw logs into structured critical events
pass triage with model google/gemini-3.1-pro-preview using raw_logs:
  Extract all critical crash events as strict JSON.
  -> critical_events

check critical_events:
  every event has timestamp, error_code, stack_trace
  at least 1 event

# 3. Use deep reasoning to identify root cause
worker deep_research with model anthropic/claude-opus-4.6 using critical_events:
  Return a detailed JSON architectural report.
  -> architecture_report

check architecture_report:
  has_keys root_cause, recommended_fix_architecture

# 4. Synthesize a concise executive brief
worker executive_summary with model anthropic/claude-sonnet-4.6 using architecture_report:
  Return strict JSON with keys summary, root_cause, recommended_fix_architecture.
  -> final_brief

check final_brief:
  has_keys summary, root_cause, recommended_fix_architecture

# 5. Render verified data as a readable report
pass format_brief using final_brief:
  Write a clear incident report from this data with headings
  for Summary, Root Cause, and Recommended Fix.
  -> incident_report

output incident_report as markdown to reports/incident-brief.md
```

call shell:cat ./logs/app.log -> raw_logs

./logs/app.log (runtime log stream) → raw_logs (unfiltered telemetry text). Loads log data into the workflow context so downstream model steps operate on explicit inputs.

pass triage with model google/gemini-3.1-pro-preview using raw_logs -> critical_events

raw_logs (unfiltered telemetry) → critical_events (strict JSON array). Uses large context capacity to convert noisy logs into structured incidents ready for deeper analysis.

every event has timestamp, error_code, stack_trace; at least 1 event

critical_events (array). Ensures the architecture model receives complete, machine-checkable incident records.

worker deep_research with model anthropic/claude-opus-4.6 using critical_events -> architecture_report

critical_events → architecture_report (JSON diagnosis). Converts event-level evidence into architectural conclusions and clear remediation paths.

check architecture_report: has_keys root_cause, recommended_fix_architecture

architecture_report. Keeps synthesis grounded in complete analysis output.

worker executive_summary with model anthropic/claude-sonnet-4.6 using architecture_report -> final_brief

architecture_report → final_brief (strict JSON brief). Creates a direct, evidence-first brief that leadership can act on immediately.

check final_brief: has_keys summary, root_cause, recommended_fix_architecture

final_brief (structured summary). Guarantees the final artifact carries the exact fields needed for downstream decisions.

pass format_brief using final_brief -> incident_report

final_brief (verified JSON) → incident_report (readable markdown). Gates verified the structure. This step renders it as a clear report with headings for Summary, Root Cause, and Recommended Fix.

output incident_report as markdown to reports/incident-brief.md

reports/incident-brief.md (for incident reviews, handoffs, and ticketing). Completes a multi-model run with a human-readable report and a verifiable execution trail.
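The pattern in this walkthrough, where each step's output passes a gate before the next step consumes it, can be sketched in plain Python. This is only an illustration under stated assumptions: `run_chain`, `GateError`, and this `has_keys` helper are hypothetical stand-ins, and each step is an ordinary callable rather than a real model call.

```python
from typing import Any, Callable

# A gate takes a step's output and returns a list of problems (empty = pass).
Gate = Callable[[Any], list[str]]


class GateError(Exception):
    """Raised when a step's output fails its gate (hypothetical name)."""


def has_keys(*keys: str) -> Gate:
    """Build a gate that requires every listed key on a dict-like value."""
    def gate(value: Any) -> list[str]:
        return [f"missing '{key}'" for key in keys if key not in value]
    return gate


def run_chain(
    initial: Any,
    steps: list[tuple[str, Callable[[Any], Any], Gate]],
) -> tuple[Any, list[dict]]:
    """Feed each step the previous verified output; stop at the first failed gate."""
    value, trail = initial, []
    for name, produce, gate in steps:
        value = produce(value)
        problems = gate(value)
        # Record every gate decision so the run leaves a reviewable trail.
        trail.append({"step": name, "passed": not problems})
        if problems:
            raise GateError(f"{name}: {', '.join(problems)}")
    return value, trail
```

Because a failed gate raises before the next step runs, a downstream step in this sketch never sees unverified input, whichever model produced it.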

Why Coglan?

Coglan lets you route one step to a frontier reasoning model and the next to a fast extraction model, all declared in readable, natively orchestrated syntax. Checkpoints anchor progress so complex multi-agent workflows can resume seamlessly from any verified state.
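As a rough picture of what resuming from a checkpoint involves, the Python sketch below persists each completed step's result and skips it on the next run. It is an assumption-laden illustration, not Coglan's storage format: `run_with_checkpoints` and the single JSON checkpoint file are hypothetical, and gate verification is left out for brevity.

```python
import json
from pathlib import Path
from typing import Any, Callable


def run_with_checkpoints(
    steps: list[tuple[str, Callable[[dict], Any]]],
    checkpoint_file: Path,
) -> tuple[dict, list[str]]:
    """Run steps in order, skipping any whose result is already checkpointed."""
    # Load results from an earlier run, if one exists.
    state = json.loads(checkpoint_file.read_text()) if checkpoint_file.exists() else {}
    executed = []
    for name, step in steps:
        if name in state:
            continue  # completed in an earlier run; reuse the stored result
        state[name] = step(state)  # each step sees all earlier results
        executed.append(name)
        checkpoint_file.write_text(json.dumps(state))  # persist after every step
    return state, executed
```

A step that raises leaves no checkpoint behind, so the next invocation picks up at exactly that step with all earlier results intact.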

Coglan introduces semantic gates that act like a type system for LLM output. Assertions derive schema intent from plain English, shifting teams from "review everything manually" to "verify every step by contract."
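One way to picture "verify every step by contract" is a loop that reruns a worker until its output satisfies the gate, passing the gate's complaints back as feedback. The Python below is a minimal sketch of that idea; `run_until_verified`, its parameters, and the feedback wording are all hypothetical rather than taken from the Coglan runtime.

```python
from typing import Callable, Optional


def run_until_verified(
    worker: Callable[[Optional[str]], dict],
    gate: Callable[[dict], list[str]],
    max_attempts: int = 3,
) -> dict:
    """Call worker until gate reports no problems, feeding failures back each time."""
    feedback = None  # the first attempt runs without feedback
    for _ in range(max_attempts):
        output = worker(feedback)
        problems = gate(output)
        if not problems:
            return output  # contract satisfied; safe to hand downstream
        # Turn the gate's findings into specific guidance for the next attempt.
        feedback = "Fix these problems: " + "; ".join(problems)
    raise RuntimeError(f"gate still failing after {max_attempts} attempts: {feedback}")
```

The attempt cap matters: without it, a worker that can never satisfy the contract would loop forever instead of surfacing the failure.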

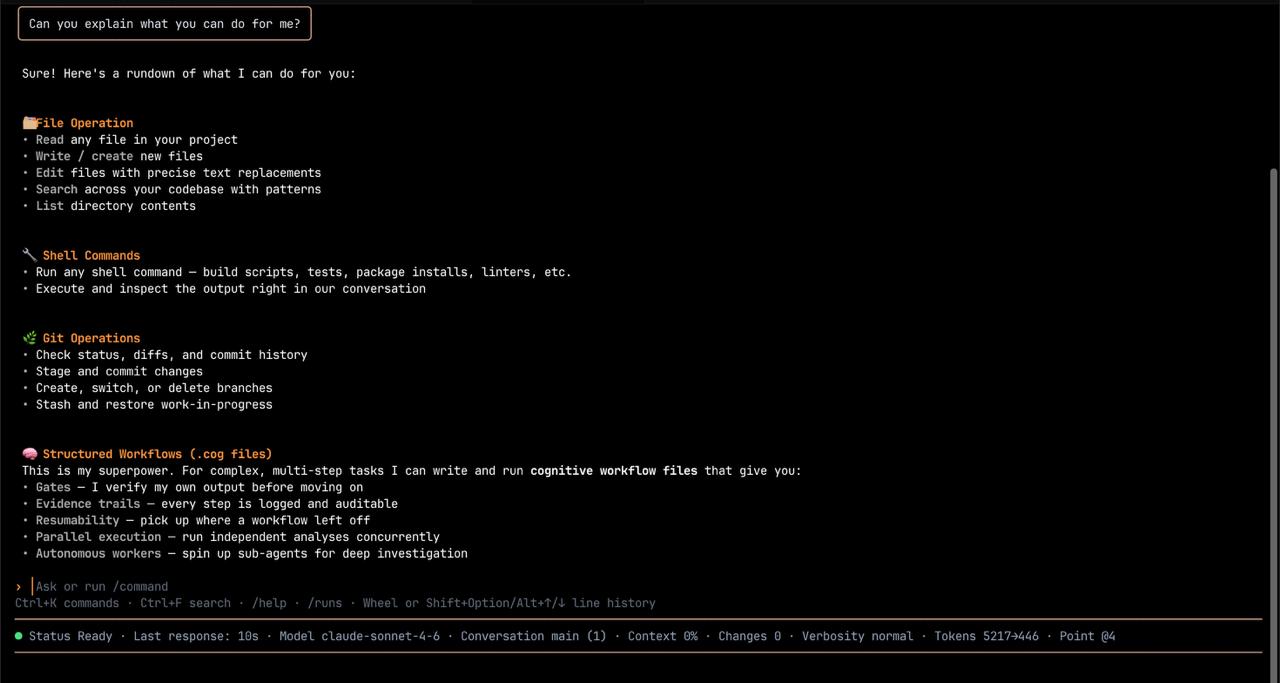

Product Surface

Coglan provides seven step types, structural primitives for control flow and scoping, and a compact syntax readable by humans, models, and the runtime.

Follow worker progress, inspect markdown artifacts, fork conversations when needed, and persist execution state safely inside the runtime loop.

Run the full Coglan console inside a VS Code sidebar with hover docs and local evidence visibility right beside your source code.

The Self-Programming Loop

Agents can write and run .cog workflows directly.

Models can write .cog because the syntax is compact and grounded in natural language.

Coglan's entire syntax fits in a single system prompt, creating a recursive loop where agents can plan, execute, verify, and continue with confidence.

An agent encounters a complex task, writes a .cog file, and runs it directly through the full runtime.

Verified results flow back into the conversation, so each next decision starts from trusted context.

Community & Adoption

The core runtime, CLI, TUI, VS Code extension, GitHub Actions, and example library stay free and extensible.

Whether you're experimenting solo or adopting Coglan across a company, team, or organization, I'd love to hear what is working, what feels promising, and where you want to go next.

Prefer direct email? christopher@arboretumconsulting.io